How to Create AI Policies

Artificial Intelligence (AI) is quickly transforming the way businesses operate, providing opportunities for automation, data-driven decision-making, and improved efficiency. However, with great power comes great responsibility. Organizations adopting AI must create clear AI policies to ensure ethical, legal, and responsible usage.

A well-structured AI policy helps organizations define how AI should be used, establish governance frameworks, and address concerns related to data privacy, security, accountability, compliance, and fairness. Without proper AI policies, businesses risk exposing themselves to legal liabilities, ethical dilemmas, and operational disruptions.

Why Does Your Business Need an AI Policy?

Before diving into policy creation, let’s explore why AI policies are crucial for organizations:

1. Regulatory Compliance

Governments and regulators worldwide are introducing AI-specific rules, such as the EU AI Act and emerging U.S. federal AI guidance. Existing data privacy laws like GDPR and CCPA already govern how AI systems handle personal data. An AI policy helps organizations comply with these evolving requirements.

2. Usage Approval

An AI policy determines which AI platforms, cloud services, software, plug-ins, and functionality may be used at the organization, and for what purposes.
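One way to make usage approvals enforceable is to keep approved tools and their permitted uses in a machine-readable register that other systems can check. The minimal Python sketch below assumes a simple in-memory register; the tool names (`internal-chatbot`, `code-assistant`), use categories, and the `is_use_approved` helper are hypothetical placeholders, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovedTool:
    """One entry in a hypothetical register of approved AI tools."""
    name: str
    allowed_uses: frozenset[str]
    allows_confidential_data: bool = False


# Hypothetical register; a real policy would maintain this list under change control.
APPROVED_TOOLS = {
    tool.name: tool
    for tool in [
        ApprovedTool("internal-chatbot", frozenset({"drafting", "summarization"}), True),
        ApprovedTool("code-assistant", frozenset({"code-review"})),
    ]
}


def is_use_approved(tool_name: str, use: str, involves_confidential_data: bool) -> bool:
    """Return True only if the tool is registered, the use is listed, and data rules are met."""
    tool = APPROVED_TOOLS.get(tool_name)
    if tool is None:
        return False  # Unlisted tools are denied by default.
    if use not in tool.allowed_uses:
        return False
    if involves_confidential_data and not tool.allows_confidential_data:
        return False
    return True


print(is_use_approved("internal-chatbot", "summarization", involves_confidential_data=True))  # True
print(is_use_approved("code-assistant", "code-review", involves_confidential_data=True))      # False
print(is_use_approved("unlisted-tool", "drafting", involves_confidential_data=False))         # False
```

The "deny by default" choice mirrors how most approval policies read: anything not explicitly listed needs a new approval before use.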

3. Ethical AI Usage

AI must be used responsibly to avoid biases, discrimination, and privacy violations. A policy defines ethical standards and accountability measures for AI-driven decision-making.

4. Data Privacy and Security

AI systems, including large language models (LLMs), rely on vast amounts of data, making data protection and cybersecurity essential. AI policies help businesses outline how data is collected, stored, and used responsibly. They also determine whether your business can use software that raises international data concerns, such as DeepSeek.

5. Risk Management

Poorly managed AI can lead to incorrect predictions, unintended consequences, and reputational damage. A solid policy helps mitigate these risks.

On July 26, 2024, NIST released NIST AI 600-1, Artificial Intelligence Risk Management Framework: Generative Artificial Intelligence Profile. The profile can help organizations identify risks that are novel to or exacerbated by generative AI, and it proposes risk management actions that organizations can select according to their own goals and priorities.

The U.S. Department of State released a “Risk Management Profile for Artificial Intelligence and Human Rights” as a practical guide for organizations—including governments, the private sector, and civil society—to design, develop, deploy, use, and govern AI in a manner consistent with respect for international human rights.

6. Organizational Alignment

An AI policy ensures that AI initiatives align with business goals, values, and corporate strategies, keeping AI use consistent across departments.

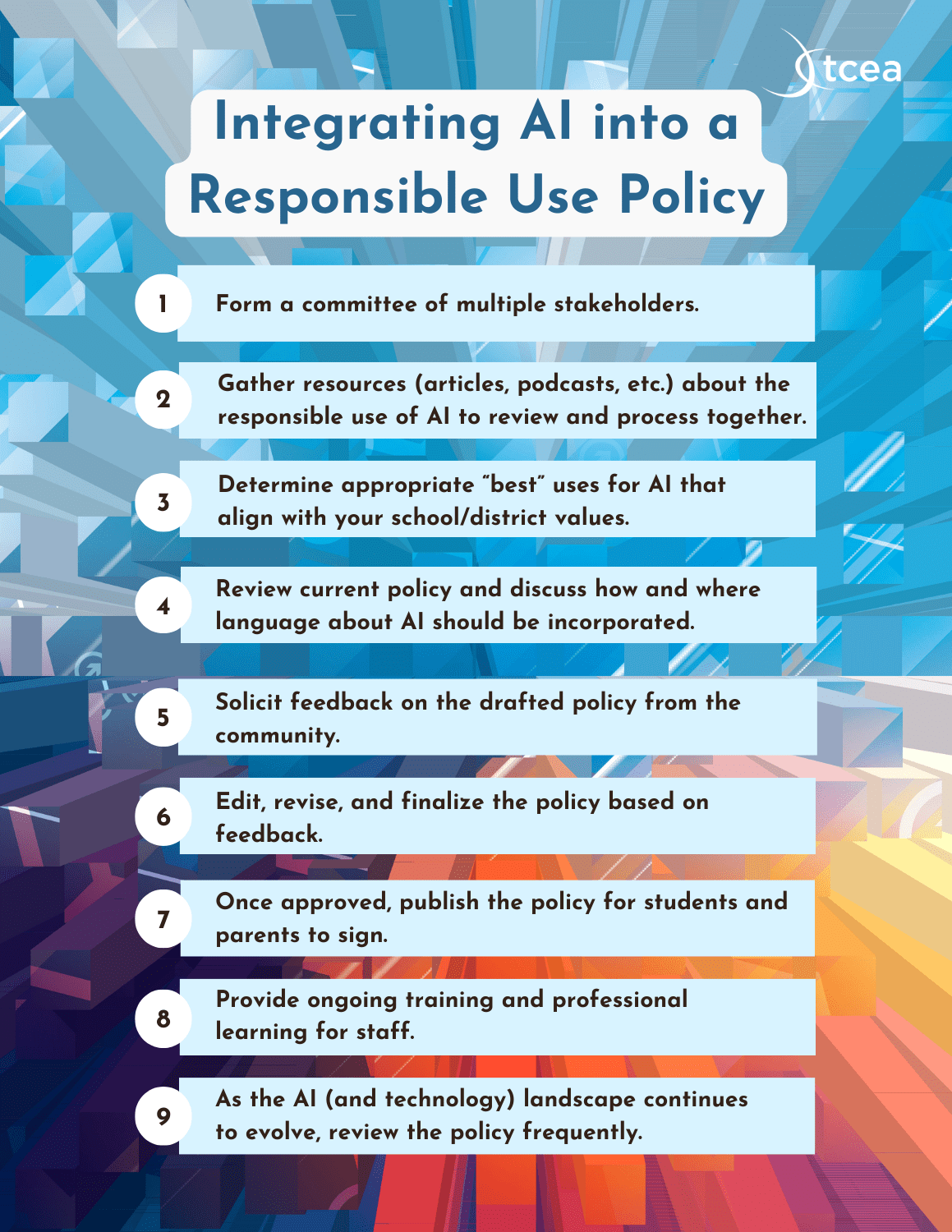

Step-by-Step Guide to Create AI Policies

Step 1: Define the Scope of AI Usage

The first step in creating an AI policy is to clearly define how AI is being used within your organization.

⚖️ What AI technologies are currently in use? (e.g., chatbots, predictive analytics, automation tools)

⚖️ Which departments rely on AI? (e.g., HR for hiring, marketing for personalization, finance for fraud detection)

⚖️ What AI projects are planned for the future, and does the organization have an AI transformation plan?

⚖️ How does AI interact with customer and employee data?

By outlining AI use cases, your policy will focus on the most relevant concerns while remaining adaptable to future AI innovations.

Step 2: Establish AI Governance and Accountability

AI governance ensures that AI systems operate safely, fairly, and transparently. Define who is responsible for AI oversight and how AI decisions are reviewed.

⚖️ AI Governance Committee: Form a dedicated team or assign a Chief AI Officer to oversee AI-related policies and compliance.

⚖️ Accountability Framework: Determine who is responsible if AI systems make errors or unintended decisions.

⚖️ Human Oversight: Specify when AI-generated decisions require human review.

This structure ensures that AI is monitored, adjusted when necessary, and aligned with business ethics.
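A human oversight rule is easiest to apply consistently when it is written down precisely. The Python sketch below shows one possible rule: decisions in high-impact categories, or below a confidence threshold, are routed to a person. The category names, the 0.90 threshold, and the `requires_human_review` helper are hypothetical examples that each organization would set for itself.

```python
from dataclasses import dataclass

# Hypothetical policy settings; each organization would choose its own values.
HIGH_IMPACT_CATEGORIES = {"hiring", "credit", "medical"}
MIN_CONFIDENCE_FOR_AUTOMATION = 0.90


@dataclass
class AIDecision:
    category: str      # Business area the decision affects.
    confidence: float  # Model-reported confidence between 0 and 1.
    outcome: str       # What the AI system recommends.


def requires_human_review(decision: AIDecision) -> bool:
    """High-impact decisions always go to a person; others only when confidence is low."""
    if decision.category in HIGH_IMPACT_CATEGORIES:
        return True
    return decision.confidence < MIN_CONFIDENCE_FOR_AUTOMATION


print(requires_human_review(AIDecision("hiring", 0.99, "advance candidate")))     # True
print(requires_human_review(AIDecision("marketing", 0.95, "send offer email")))  # False
print(requires_human_review(AIDecision("marketing", 0.70, "send offer email")))  # True
```

Model confidence scores are not always well calibrated, so a policy would typically pair a threshold like this with periodic spot checks of automated decisions.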

Step 3: Address Data Privacy and Security Concerns

AI policies must include strong data protection measures to ensure AI systems handle personal and sensitive information securely.

⚖️ Data Collection: Clearly state what data AI collects and why.

⚖️ Data Storage & Retention: Define how long data collected or generated by AI systems is stored and when it is deleted.

⚖️ Data Protection: Implement encryption, access controls, and security measures.

⚖️ User Consent: Ensure compliance with data privacy laws (e.g., GDPR and CCPA) by obtaining user consent where the law requires it and giving users the notices and choices those laws mandate before collecting personal data.

This step protects customer trust, reduces legal risks, and ensures AI-driven processes are compliant with data privacy regulations.
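Retention rules are straightforward to automate once each data category has a defined period. The Python sketch below assumes hypothetical categories and retention periods (in days) and simply reports whether a record is past its limit; the actual periods would come from your legal and compliance requirements.

```python
from datetime import date, timedelta

# Hypothetical retention periods, in days, per data category.
RETENTION_DAYS = {
    "chat_transcripts": 90,
    "model_prompts": 30,
    "training_data": 365,
}


def is_due_for_deletion(category: str, created_on: date, today: date) -> bool:
    """Return True when a record has been kept longer than its category's retention period."""
    if category not in RETENTION_DAYS:
        # Refusing unknown categories forces every data type to have a defined period.
        raise ValueError(f"No retention period defined for category: {category}")
    return today - created_on > timedelta(days=RETENTION_DAYS[category])


today = date(2025, 1, 31)
print(is_due_for_deletion("model_prompts", date(2024, 12, 1), today))     # True (61 days old)
print(is_due_for_deletion("chat_transcripts", date(2024, 12, 1), today))  # False
```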

Step 4: Implement Bias Mitigation and Fairness Controls

AI algorithms can unintentionally develop biases, leading to unfair outcomes, discrimination, or ethical concerns. Organizations must ensure AI operates fairly and transparently.

⚖️ Bias Testing: Regularly audit AI models to detect and reduce biases.

⚖️ Fairness Standards: Establish rules to ensure AI decisions do not discriminate based on race, gender, age, or other protected categories.

⚖️ Explainability: AI decisions should be explainable and understandable. Avoid “black box” AI models where users cannot see how decisions are made.

By proactively addressing bias, companies can prevent reputational damage and build trust in AI systems.
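A common first check for AI tools used in hiring is the "four-fifths rule" from the U.S. Uniform Guidelines on Employee Selection Procedures: if a group's selection rate is less than 80% of the highest group's rate, enforcement agencies generally treat that as evidence of adverse impact. The Python sketch below computes that ratio. The group labels and counts are made-up illustration data, and a single ratio is a screening check, not a complete fairness audit.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to selected / applicants."""
    return {group: selected / applicants for group, (selected, applicants) in outcomes.items()}


def adverse_impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Divide each group's selection rate by the highest group's rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    return {group: rate / highest for group, rate in rates.items()}


def flag_adverse_impact(outcomes: dict[str, tuple[int, int]], threshold: float = 0.8) -> list[str]:
    """Return groups whose ratio falls below the four-fifths (0.8) threshold."""
    ratios = adverse_impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Made-up results from a hypothetical AI resume filter: (selected, applicants).
results = {"group_a": (40, 80), "group_b": (30, 80)}
print(adverse_impact_ratios(results))  # {'group_a': 1.0, 'group_b': 0.75}
print(flag_adverse_impact(results))    # ['group_b']
```

A flagged group is a signal to investigate the model and its training data, not an automatic finding of discrimination.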

Step 5: Ensure AI Transparency and Explainability

AI should not operate as a mystery—employees and customers should understand how AI makes decisions.

⚖️ Disclose AI Use: Inform users when they are interacting with AI (e.g., automated customer support, AI-driven hiring tools).

⚖️ Provide AI Decision Justifications: AI should be able to explain why it made a certain recommendation or decision.

⚖️ Enable User Control: Allow users to correct AI errors, override AI decisions when necessary, and opt out of AI-driven interactions.

Transparency helps businesses build credibility and trust in AI technologies.

Step 6: Align AI Usage with Legal and Compliance Requirements

AI policies must align with industry regulations, global AI laws, and internal corporate policies.

⚖️ Follow Regulatory Guidelines: Comply with the regulations that apply to your industry and data (e.g., GDPR, HIPAA, PCI DSS) and with recognized AI ethics frameworks.

⚖️ Maintain Audit Trails: Keep logs of AI activity for accountability and compliance audits.

⚖️ Conduct Compliance Training: Educate employees on AI risks and responsibilities.

This step ensures your business remains compliant, avoids legal consequences, and protects stakeholders.
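An audit trail is most useful when every entry has the same structure. The Python sketch below writes one JSON object per line to an append-only file; the field names, the file name `ai_audit.log`, and the sample values are hypothetical. It stores short summaries rather than raw inputs, which keeps personal data out of the log.

```python
import json
from datetime import datetime, timezone


def audit_record(system: str, user: str, action: str,
                 input_summary: str, output_summary: str) -> str:
    """Build one JSON line describing an AI interaction for later compliance review."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system": system,
        "user": user,
        "action": action,
        "input_summary": input_summary,
        "output_summary": output_summary,
    }
    return json.dumps(record, sort_keys=True)


def append_audit_record(path: str, line: str) -> None:
    """Open in append mode so earlier entries are never overwritten."""
    with open(path, "a", encoding="utf-8") as log_file:
        log_file.write(line + "\n")


line = audit_record("internal-chatbot", "jdoe", "summarize_document",
                    "Q3 vendor contract", "two-paragraph summary")
append_audit_record("ai_audit.log", line)
```

Append mode alone does not make a log tamper-proof; production audit trails are usually shipped to protected storage that ordinary users cannot edit.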

Step 7: Define AI Risk Management and Incident Response

AI errors, cyber threats, and misuse of AI are potential risks. Your policy should outline how your company manages AI-related risks.

⚖️ AI Risk Management: Identify, assess, and mitigate AI risks before they cause harm.

⚖️ Incident Response Plan: Have a clear process for handling AI failures, cybersecurity breaches, or unethical AI behavior.

⚖️ Continuous AI Monitoring: AI systems should be regularly tested, updated, and monitored for potential issues.

A structured risk management plan helps organizations react swiftly to AI-related challenges.
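Continuous monitoring usually comes down to comparing a measured signal against a limit that triggers the incident response plan. The Python sketch below assumes a hypothetical 5% error-rate threshold and a count of outputs that users or reviewers flagged as wrong; real monitoring would track several signals (accuracy, drift, security alerts), not just one.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical alert threshold; set per system based on its risk level.
MAX_ERROR_RATE = 0.05


@dataclass
class MonitoringWindow:
    system: str
    total_requests: int
    flagged_errors: int  # Outputs users or reviewers marked as wrong.


def check_window(window: MonitoringWindow) -> Optional[str]:
    """Return an incident summary if the error rate exceeds the threshold, else None."""
    if window.total_requests == 0:
        return None
    error_rate = window.flagged_errors / window.total_requests
    if error_rate > MAX_ERROR_RATE:
        return (f"INCIDENT: {window.system} error rate {error_rate:.1%} "
                f"exceeds {MAX_ERROR_RATE:.0%}; start the incident response plan.")
    return None


print(check_window(MonitoringWindow("internal-chatbot", 1000, 20)))  # None
print(check_window(MonitoringWindow("internal-chatbot", 1000, 80)))
```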

Step 8: Establish AI Training and Awareness Programs

To ensure AI policies are followed, employees must be trained on responsible AI usage.

⚖️ AI Ethics Training: Educate employees on responsible AI decision-making.

⚖️ Technical Training: Ensure IT teams understand how to develop, test, and deploy ethical AI models.

⚖️ Cross-Departmental AI Education: Train HR, legal, marketing, and finance teams on AI policies relevant to their roles.

A well-informed workforce reduces AI risks and ensures ethical AI adoption.

Create AI Policies to Enable Responsible AI Growth

AI is a powerful tool, but without proper policies, it can introduce ethical, legal, and operational risks. Organizations must proactively establish AI governance, data privacy measures, bias mitigation strategies, and compliance frameworks to ensure AI operates safely and fairly.

DAG Tech’s CxO AI Policy consulting services help businesses develop, implement, and monitor AI policies tailored to their needs.

Our AI policy consulting services include:

✔ AI Readiness Assessments – Evaluating AI risks, governance needs, and compliance gaps.

✔ Custom AI Policy Consulting and Development – Drafting company-wide AI guidelines.

✔ AI Governance Frameworks – Ensuring AI aligns with business goals and legal standards.

✔ Bias Audits and Fairness Testing – Detecting and mitigating AI biases.

✔ AI Security and Compliance Solutions – Protecting AI systems from cyber threats and regulatory violations.

By implementing strong AI policies, businesses can embrace AI with confidence, unlock innovation, and ensure AI-driven success.

Want to create AI policies for your business? Contact DAG Tech today for AI Policy consulting!